Binary cross entropy

12/7/2023

PyTorch Binary cross entropy with logits

In this section, we will learn about PyTorch binary cross entropy with logits in Python. Binary cross entropy compares each predicted probability with the actual output, which can be 0 or 1. It then computes a score that penalizes each probability based on its distance from the expected value.
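In PyTorch, `torch.nn.BCEWithLogitsLoss` combines a sigmoid layer and `BCELoss` in one class. It takes raw scores (logits) instead of probabilities, which is more numerically stable than applying the sigmoid separately. The sketch below is a from-scratch, pure-Python illustration of that idea, not PyTorch's own implementation. The function names `sigmoid`, `bce_naive` and `bce_with_logits`, and the sample logits and targets, are hypothetical. It compares the naive "sigmoid then log" computation with an algebraically equivalent form that never exponentiates a large positive number.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic function: maps a raw score (logit) into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def bce_naive(logit: float, target: float) -> float:
    """Apply the sigmoid first, then binary cross entropy on the probability."""
    p = sigmoid(logit)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def bce_with_logits(logit: float, target: float) -> float:
    """Same loss computed directly from the logit (hypothetical helper).

    Uses the identity
        -(t*log(s(x)) + (1-t)*log(1-s(x))) = max(x, 0) - x*t + log(1 + exp(-|x|)),
    so exp() is only ever called on a non-positive number.
    """
    return max(logit, 0.0) - logit * target + math.log1p(math.exp(-abs(logit)))

def mean(values):
    return sum(values) / len(values)

logits = [0.8, -1.2, 2.5, -0.3]   # hypothetical raw model scores
targets = [1.0, 0.0, 1.0, 1.0]    # labels are 0 or 1

naive = mean([bce_naive(x, t) for x, t in zip(logits, targets)])
stable = mean([bce_with_logits(x, t) for x, t in zip(logits, targets)])
print(naive, stable)  # the two agree up to floating-point rounding

# For an extreme logit the naive path breaks down:
# sigmoid(40.0) rounds to exactly 1.0, so log(1 - p) would be log(0).
print(bce_with_logits(40.0, 0.0))  # ≈ 40.0, still finite
```

The rearranged form is why a "with logits" loss is preferred when the model's last layer outputs raw scores: the sigmoid and the logarithm are folded together, so saturated probabilities no longer produce `log(0)`.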

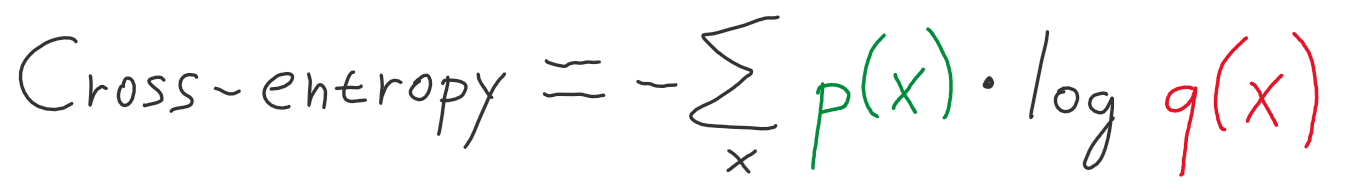

`torch.nn.BCELoss` creates a criterion that measures the binary cross entropy between the target probabilities and the input probabilities.

The syntax of binary cross entropy in PyTorch is:

torch.nn.BCELoss(weight=None, size_average=None, reduce=None, reduction='mean')

- weight: a manual rescaling weight given to the loss of each element.
- size_average: (deprecated, see reduction) by default, the losses are averaged over every loss element in the batch.
- reduce: (deprecated, see reduction) by default, the losses are averaged or summed over the observations in each minibatch, depending on size_average.
- reduction: specifies the reduction applied to the output: 'none', 'mean', or 'sum'.
  - 'none': no reduction is applied.
  - 'mean': the sum of the output is divided by the number of elements in the output.
  - 'sum': the output is summed.

PyTorch Binary cross entropy example

In this section, we will learn how to implement binary cross entropy with the help of an example in PyTorch. The criterion calculates the binary cross entropy between the target and input probabilities, and it is also used for measuring the error of a reconstruction, for example in an autoencoder.

In the following code, we import the torch module, from which we can calculate the binary cross entropy.

```python
import torch
from torch import nn

x = nn.Sigmoid()                                 # squashes raw scores into (0, 1)
loss = nn.BCELoss()                              # binary cross entropy, reduction='mean'
input_prob = torch.randn(4, requires_grad=True)  # four random raw scores
target_prob = torch.empty(4).random_(2)          # four random labels, each 0 or 1
output_prob = loss(x(input_prob), target_prob)   # loss between sigmoid outputs and labels
print(output_prob)
```

- x = nn.Sigmoid() ensures that each output lies between 0 and 1.
- loss = nn.BCELoss() creates the binary cross entropy loss.
- input_prob = torch.randn(4, requires_grad=True) creates the input scores.
- target_prob = torch.empty(4).random_(2) creates the target labels; random_(2) draws integers from {0, 1}, which is the range BCELoss expects for binary targets.
- output_prob = loss(x(input_prob), target_prob) computes the loss between the predicted probabilities and the targets.
- print(output_prob) prints the loss on the screen.

After running the above code, the binary cross entropy value is printed on the screen.
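To make the weight and reduction options above concrete, here is a pure-Python sketch of the per-element formula, -w * (t * log(p) + (1 - t) * log(1 - p)), followed by the three reduction modes. It is an illustration under stated assumptions, not PyTorch's code: `bce_loss` and the sample values are hypothetical, and PyTorch's `BCELoss` additionally clamps each log term at -100 so that a probability of exactly 0 or 1 gives a finite loss, which this sketch omits (it would raise an error on such inputs).

```python
import math

def bce_loss(probs, targets, weight=None, reduction="mean"):
    """Hypothetical pure-Python analogue of torch.nn.BCELoss.

    Computes -w * (t*log(p) + (1-t)*log(1-p)) per element, then reduces.
    Unlike PyTorch, the log terms are NOT clamped at -100 here.
    """
    if weight is None:
        weight = [1.0] * len(probs)  # no rescaling by default
    losses = [
        -w * (t * math.log(p) + (1.0 - t) * math.log(1.0 - p))
        for p, t, w in zip(probs, targets, weight)
    ]
    if reduction == "none":
        return losses                       # one loss per element
    if reduction == "sum":
        return sum(losses)                  # total over all elements
    if reduction == "mean":
        return sum(losses) / len(losses)    # sum divided by element count
    raise ValueError(f"unknown reduction: {reduction!r}")

probs = [0.9, 0.2, 0.6, 0.4]    # outputs already squashed into (0, 1)
targets = [1.0, 0.0, 1.0, 0.0]  # binary labels

per_element = bce_loss(probs, targets, reduction="none")
print(per_element)
print(bce_loss(probs, targets, reduction="sum"))
print(bce_loss(probs, targets))  # 'mean' is the default

# A per-element weight rescales individual losses; a weight of 0 ignores an element.
print(bce_loss(probs, targets, weight=[2.0, 0.0, 0.0, 0.0], reduction="sum"))
```

With 'mean', the result is always the 'sum' result divided by the number of elements, which matches the description of the reduction modes listed above.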
In this section, we learned about PyTorch binary cross entropy in Python. Related topics:

- PyTorch Binary cross entropy with logits
- PyTorch Binary cross entropy loss function
- PyTorch Binary cross entropy pos_weight
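`pos_weight` is an optional argument of `torch.nn.BCEWithLogitsLoss` that multiplies the positive-class term of the loss, which helps when positive examples are rare. The pure-Python sketch below illustrates that effect; it is not PyTorch's implementation. The helper names `softplus_neg` and `bce_with_logits_pos_weight` are hypothetical, and so is the 100-positive / 300-negative dataset used to pick the weight (a common heuristic is the ratio of negatives to positives).

```python
import math

def softplus_neg(x: float) -> float:
    """Numerically stable log(1 + exp(-x)) (hypothetical helper)."""
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))

def bce_with_logits_pos_weight(logit: float, target: float, pos_weight: float = 1.0) -> float:
    """-[pos_weight*t*log(sigmoid(x)) + (1-t)*log(1-sigmoid(x))],
    rearranged as (1-t)*x + (pos_weight*t + 1 - t) * log(1 + exp(-x))."""
    return (1.0 - target) * logit + (pos_weight * target + 1.0 - target) * softplus_neg(logit)

# Hypothetical imbalanced data: 100 positives vs 300 negatives.
pos_weight = 300 / 100

print(bce_with_logits_pos_weight(-0.5, 1.0))              # positive example, unweighted
print(bce_with_logits_pos_weight(-0.5, 1.0, pos_weight))  # same example, loss scaled by 3
print(bce_with_logits_pos_weight(-0.5, 0.0, pos_weight))  # negative examples are unaffected
```

Because only the positive term is scaled, a missed positive costs more than a false alarm, which pushes the model toward higher recall on the rare class.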
